LoRA

1. Introduction to LoRA

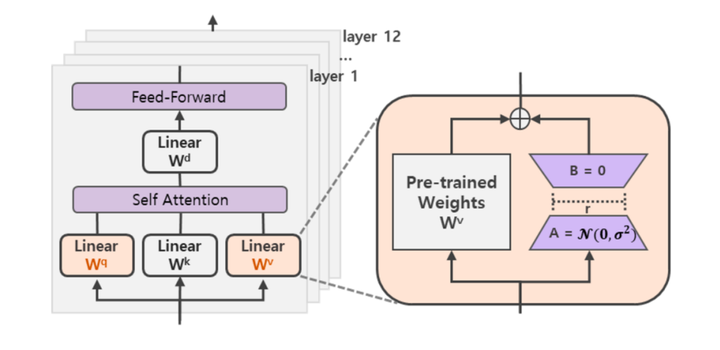

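LoRA (paper: LoRA: Low-Rank Adaptation of Large Language Models) uses a low-rank decomposition to model the change in the weights, so that a large model can be trained indirectly with a very small number of trainable parameters.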

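In modules that involve matrix multiplication, a new path is added alongside the original pre-trained language model (PLM) weights. The path multiplies by two matrices in sequence: the first matrix, A, projects down, and the second matrix, B, projects back up, with an intermediate dimension of r. This models the so-called intrinsic rank.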

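The trainable path has the same dimension d as the pre-trained layer: one fully connected layer projects from d down to r, and a second one maps from r back to d, where r << d and r is an upper bound on the rank of the update. The number of parameters in the update therefore drops from d x d to d x r + r x d, which is far smaller.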
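The saving can be checked with a little arithmetic. The sketch below compares a dense d x d update with the two low-rank factors; the values d = 1024 and r = 8 are illustrative assumptions, and the function names are hypothetical helpers, not part of any library.

```python
def full_update_params(d: int) -> int:
    """Number of parameters in a dense d x d update matrix."""
    return d * d


def lora_update_params(d: int, r: int) -> int:
    """Number of parameters in the two low-rank factors: A (r x d) and B (d x r)."""
    return r * d + d * r


# Illustrative values (assumptions chosen for this example).
d, r = 1024, 8

full = full_update_params(d)
low_rank = lora_update_params(d, r)

print(f"dense update:    {full:,} parameters")
print(f"low-rank update: {low_rank:,} parameters")
print(f"reduction:       {full // low_rank}x")
```

For these values the low-rank factors hold 16,384 parameters against 1,048,576 for the dense update, a 64x reduction.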

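When training on a downstream task, all other model parameters are frozen and only the weights of the two new matrices are optimized. The outputs of the PLM path and of the new path are added to give the final result (both paths have the same input and output dimensions), i.e. h = Wx + BAx, where W is the frozen pre-trained weight (written W_0 in the formula below). The weights of A are initialized from a Gaussian distribution and the weights of B are initialized to zero, so BA = 0 at the start of training and the new path has no effect on the model's output.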

$$
h = W_{0} x + \Delta W x = W_{0} x + B A x
$$

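At inference time the two paths can be combined: h = Wx + BAx = (W + BA)x. It is enough to add the trained product BA to the original weight matrix W and use the sum in place of the PLM's W, so inference requires no additional computation.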
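The two properties described above (no effect at initialization, and an exact merge for inference) can be checked on small matrices. This is a minimal sketch in plain Python lists to stay dependency-free; the sizes, the random values, and the helper names `matvec`, `matmul`, and `lora_forward` are assumptions made for illustration, and the scaling factor used by real implementations is left out.

```python
import random


def matvec(M, v):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in M]


def matmul(X, Y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def lora_forward(W0, A, B, x):
    """Two-path forward pass: h = W0 x + B (A x)."""
    base = matvec(W0, x)
    update = matvec(B, matvec(A, x))
    return [b + u for b, u in zip(base, update)]


random.seed(0)
d, r = 6, 2  # toy sizes, chosen only for this example

W0 = [[random.gauss(0, 1) for _ in range(d)] for _ in range(d)]   # frozen, d x d
A = [[random.gauss(0, 0.02) for _ in range(d)] for _ in range(r)]  # r x d, Gaussian init
B = [[0.0] * r for _ in range(d)]                                  # d x r, zero init
x = [random.gauss(0, 1) for _ in range(d)]

# With B = 0 the new path contributes nothing.
h_init = lora_forward(W0, A, B, x)
assert h_init == matvec(W0, x)

# Stand-in for training: give B some non-zero values.
B = [[random.gauss(0, 0.1) for _ in range(r)] for _ in range(d)]
h_two_path = lora_forward(W0, A, B, x)

# Merge for inference: W = W0 + BA, then a single matrix-vector product.
BA = matmul(B, A)
W_merged = [[w + u for w, u in zip(w_row, u_row)] for w_row, u_row in zip(W0, BA)]
h_merged = matvec(W_merged, x)

max_diff = max(abs(p - q) for p, q in zip(h_two_path, h_merged))
print("max difference between two-path and merged outputs:", max_diff)
```

The two outputs agree up to floating-point rounding, which is why the merged weights can replace the original ones without changing the inference cost.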

2. Fine-Tuning in Practice

2.1 Import libraries

import os

import torch
from datasets import load_dataset
from peft import LoraConfig, TaskType, get_peft_config, get_peft_model, get_peft_model_state_dict
from torch.utils.data import DataLoader
from tqdm import tqdm
from transformers import (
    AutoModelForCausalLM,
    AutoTokenizer,
    default_data_collator,
    get_linear_schedule_with_warmup,
)

2.2 Create the configuration for the LoRA fine-tuning method

device = "cuda"
model_name_or_path = "/data/nfs/llm/model/bloomz-560m"
tokenizer_name_or_path = "/data/nfs/llm/model/bloomz-560m"

# LoRA configuration for causal language modeling
peft_config = LoraConfig(
    task_type=TaskType.CAUSAL_LM,
    inference_mode=False,
    r=8,
    lora_alpha=32,
    lora_dropout=0.1,
)

dataset_name = "twitter_complaints"
checkpoint_name = f"{dataset_name}_{model_name_or_path}_{peft_config.peft_type}_{peft_config.task_type}_v1.pt".replace("/", "_")
text_column = "Tweet text"
label_column = "text_label"
max_length = 64
lr = 3e-2
num_epochs = 10
batch_size = 8


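Parameter description:

task_type: the task type, for example conditional generation (SEQ_2_SEQ_LM) or causal language modeling (CAUSAL_LM).

inference_mode: whether the PEFT model is used in inference mode.

r: the rank of the LoRA low-rank matrices. In practice, 4, 8, or 16 is usually enough.

lora_alpha: the scaling coefficient of the LoRA low-rank matrices, a constant hyperparameter. Adjusting alpha has an effect similar to adjusting the learning rate.

lora_dropout: the dropout rate of the LoRA layers, in the range [0, 1).

target_modules: a list of module names, or a regular expression over module names, to be replaced with LoRA layers. Module names differ between model types, so this must be set for the specific model. For example, the default for LLaMA is [q_proj, v_proj], and it can also be set explicitly to [q_proj, k_proj, v_proj, o_proj].

The default module names for the models supported by PEFT are as follows: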

TRANSFORMERS_MODELS_TO_LORA_TARGET_MODULES_MAPPING = {
    "t5": ["q", "v"],
    "mt5": ["q", "v"],
    "bart": ["q_proj", "v_proj"],
    "gpt2": ["c_attn"],
    "bloom": ["query_key_value"],
    "blip-2": ["q", "v", "q_proj", "v_proj"],
    "opt": ["q_proj", "v_proj"],
    "gptj": ["q_proj", "v_proj"],
    "gpt_neox": ["query_key_value"],
    "gpt_neo": ["q_proj", "v_proj"],
    "bert": ["query", "value"],
    "roberta": ["query", "value"],
    "xlm-roberta": ["query", "value"],
    "electra": ["query", "value"],
    "deberta-v2": ["query_proj", "value_proj"],
    "deberta": ["in_proj"],
    "layoutlm": ["query", "value"],
    "llama": ["q_proj", "v_proj"],
    "chatglm": ["query_key_value"],
    "gpt_bigcode": ["c_attn"],
    "mpt": ["Wqkv"],
}

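The weight matrices of a Transformer include Wq, Wk, and Wv, which compute the query, key, and value in the attention module, the Wo of multi-head attention, and the weight matrices of the MLP layers. LoRA is applied only to the four weight matrices in the attention module, and ablation experiments found that adapting Wq and Wv together gives the best results. For this reason, the default module names mostly correspond to the Wq and Wv weight matrices.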
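As a worked illustration of the lora_alpha parameter described above: in the LoRA paper, and in PEFT's default setting (without rank-stabilized scaling), the low-rank update is multiplied by lora_alpha / r before it is added to the frozen path. With the values used in this configuration (r = 8, lora_alpha = 32), that factor is 4:

$$
h = W_{0} x + \frac{\alpha}{r} B A x, \qquad \frac{\alpha}{r} = \frac{32}{8} = 4
$$

Because the update is scaled by this ratio, changing r without changing lora_alpha also changes the effective size of the update.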

# dataset = load_dataset("ought/raft", dataset_name)
dataset = load_dataset("/home/guodong.li/data/peft/raft/raft.py", dataset_name, cache_dir="/home/guodong.li/data/peft/data")

# Human-readable class names, e.g. "no_complaint" -> "no complaint"
classes = [k.replace("_", " ") for k in dataset["train"].features["Label"].names]
print(classes)

# Add a text_label column holding the class name for each integer label
dataset = dataset.map(
    lambda x: {"text_label": [classes[label] for label in x["Label"]]},
    batched=True,
    num_proc=1,
)
print(dataset)
dataset["train"][0]


['Unlabeled', 'complaint', 'no complaint']

DatasetDict({
    train: Dataset({
        features: ['Tweet text', 'ID', 'Label', 'text_label'],
        num_rows: 50
    })
    test: Dataset({
        features: ['Tweet text', 'ID', 'Label', 'text_label'],
        num_rows: 3399
    })
})

{'Tweet text': '@HMRCcustomers No this is my first job',
 'ID': 0,
 'Label': 2,
 'text_label': 'no complaint'}

# data preprocessing
tokenizer = AutoTokenizer.from_pretrained(model_name_or_path)
if tokenizer.pad_token_id is None:
    tokenizer.pad_token_id = tokenizer.eos_token_id

# Longest class label, measured in tokens
target_max_length = max([len(tokenizer(class_label)["input_ids"]) for class_label in classes])
print("target_max_length:", target_max_length)


def preprocess_function(examples):
    batch_size = len(examples[text_column])
    inputs = [f"{text_column} : {x} Label : " for x in examples[text_column]]
    targets = [str(x) for x in examples[label_column]]
    model_inputs = tokenizer(inputs)
    labels = tokenizer(targets)
    # Append the target to the prompt; the prompt positions are ignored by the loss (-100)
    for i in range(batch_size):
        sample_input_ids = model_inputs["input_ids"][i]
        label_input_ids = labels["input_ids"][i] + [tokenizer.pad_token_id]
        # print(i, sample_input_ids, label_input_ids)
        model_inputs["input_ids"][i] = sample_input_ids + label_input_ids
        labels["input_ids"][i] = [-100] * len(sample_input_ids) + label_input_ids
        model_inputs["attention_mask"][i] = [1] * len(model_inputs["input_ids"][i])
    # print(model_inputs)
    # Left-pad every sequence to max_length, then truncate to max_length
    for i in range(batch_size):
        sample_input_ids = model_inputs["input_ids"][i]
        label_input_ids = labels["input_ids"][i]
        model_inputs["input_ids"][i] = [tokenizer.pad_token_id] * (
            max_length - len(sample_input_ids)
        ) + sample_input_ids
        model_inputs["attention_mask"][i] = [0] * (max_length - len(sample_input_ids)) + model_inputs[
            "attention_mask"
        ][i]
        labels["input_ids"][i] = [-100] * (max_length - len(sample_input_ids)) + label_input_ids
        model_inputs["input_ids"][i] = torch.tensor(model_inputs["input_ids"][i][:max_length])
        model_inputs["attention_mask"][i] = torch.tensor(model_inputs["attention_mask"][i][:max_length])
        labels["input_ids"][i] = torch.tensor(labels["input_ids"][i][:max_length])
    model_inputs["labels"] = labels["input_ids"]
    return model_inputs


processed_datasets = dataset.map(
    preprocess_function,
    batched=True,
    num_proc=1,
    remove_columns=dataset["train"].column_names,
    load_from_cache_file=False,
    desc="Running tokenizer on dataset",
)

train_dataset = processed_datasets["train"]
# Note: the evaluation set reuses the training split here
eval_dataset = processed_datasets["train"]

train_dataloader = DataLoader(train_dataset, shuffle=True, collate_fn=default_data_collator, batch_size=batch_size, pin_memory=True)
eval_dataloader = DataLoader(eval_dataset, collate_fn=default_data_collator, batch_size=batch_size, pin_memory=True)

target_max_length: 3
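The padding and label-masking logic of preprocess_function is easier to follow on a single toy example. The sketch below mirrors the same steps for one sequence using plain lists; the token ids, the pad id of 3, and the helper name `build_example` are hypothetical values chosen for illustration, not output of the real tokenizer.

```python
IGNORE_INDEX = -100  # label value that the loss ignores


def build_example(prompt_ids, target_ids, pad_id, max_length):
    """Build left-padded input_ids, attention_mask and labels for one example."""
    # The target is terminated with the pad token, as in preprocess_function.
    target = target_ids + [pad_id]

    input_ids = prompt_ids + target
    # Only the target positions contribute to the loss.
    labels = [IGNORE_INDEX] * len(prompt_ids) + target
    attention_mask = [1] * len(input_ids)

    # Left-pad to max_length; a negative count simply yields no padding.
    n_pad = max_length - len(input_ids)
    input_ids = [pad_id] * n_pad + input_ids
    attention_mask = [0] * n_pad + attention_mask
    labels = [IGNORE_INDEX] * n_pad + labels

    # Truncate sequences that are longer than max_length.
    return {
        "input_ids": input_ids[:max_length],
        "attention_mask": attention_mask[:max_length],
        "labels": labels[:max_length],
    }


# Hypothetical token ids for a short prompt and a two-token label.
example = build_example(prompt_ids=[11, 12, 13], target_ids=[21, 22], pad_id=3, max_length=8)
for key, value in example.items():
    print(f"{key:15s} {value}")
```

Padding goes on the left so that the label tokens sit at the end of every sequence, and both the padding and the prompt positions carry -100 in labels so that only the label tokens are scored.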

def test_preprocess_function(examples):
    batch_size = len(examples[text_column])
    inputs = [f"{text_column} : {x} Label : " for x in examples[text_column]]
    model_inputs = tokenizer(inputs)
    # print(model_inputs)
    # Left-pad the prompts to max_length; no labels are built for the test split
    for i in range(batch_size):
        sample_input_ids = model_inputs["input_ids"][i]
        model_inputs["input_ids"][i] = [tokenizer.pad_token_id] * (max_length - len(sample_input_ids)) + sample_input_ids
        model_inputs["attention_mask"][i] = [0] * (max_length - len(sample_input_ids)) + model_inputs["attention_mask"][i]
        model_inputs["input_ids"][i] = torch.tensor(model_inputs["input_ids"][i][:max_length])
        model_inputs["attention_mask"][i] = torch.tensor(model_inputs["attention_mask"][i][:max_length])
    return model_inputs


test_dataset = dataset["test"].map(
    test_preprocess_function,
    batched=True,
    num_proc=1,
    remove_columns=dataset["train"].column_names,
    load_from_cache_file=False,
    desc="Running tokenizer on dataset",
)

test_dataloader = DataLoader(test_dataset, collate_fn=default_data_collator, batch_size=batch_size, pin_memory=True)
next(iter(test_dataloader))

{'input_ids':tensor([[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

227985, 5484, 915, 2566, 74757, 64626, 12384, 44639, 613,

52282, 2670, 79920, 3344, 1002, 368, 17646, 14472, 8348,

664, 718, 4, 19036, 17, 31849, 17, 6312, 76,

44, 62470, 56, 91, 50, 14839, 21, 77658, 915,

210],

[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 227985, 5484, 915, 405, 187059,

2256, 664, 2550, 18833, 18607, 162467, 4, 1387, 6199,

3291, 23405, 613, 4657, 17082, 566, 3432, 368, 78851,

1185, 61273, 23181, 1553, 15596, 212, 116057, 77658, 915,

210],

[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 227985, 5484,

915, 39762, 2566, 22253, 6201, 75701, 15, 632, 718,

5840, 10006, 6201, 18881, 427, 3804, 19528, 267, 158974,

1320, 368, 10029, 632, 49666, 92, 34, 77658, 915,

210],

[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 227985, 5484, 915, 2566, 104565, 8695, 2089, 6140,

109676, 99579, 1369, 512, 368, 4570, 54, 632, 368,

1503, 241485, 132226, 15, 982, 727, 1152, 18100, 861,

32596, 77597, 168154, 1306, 132226, 4346, 87843, 17, 130462,

364, 32923, 89, 53, 8309, 20, 75, 77658, 915,

210],

[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 227985, 5484, 915, 2566,

14173, 2960, 29906, 387, 20706, 49337, 1369, 77658, 915,

210],

[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 227985, 5484, 915, 2566, 219553, 45736,

36876, 1713, 72, 707, 187205, 13002, 177324, 77658, 915,

210],

[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 227985, 5484, 915, 2566, 233938, 28518, 13716,

427, 28146, 1119, 17918, 17, 236706, 368, 214997, 7555,

48659, 5276, 21600, 343, 17, 51416, 22403, 318, 1531,

1306, 1130, 20934, 567, 101161, 184849, 87843, 17, 1594,

15231, 2052, 16642, 20, 7180, 80, 26, 77658, 915,

210],

[ 3, 3, 3, 3, 3, 3, 3, 3, 3,

3, 3, 3, 3, 3, 3, 3, 3, 3,

227985, 5484, 915, 2566, 80, 2068, 479, 2566, 80,

1376, 878, 147587, 3904, 632, 368, 6084, 65673, 78851,

11736, 15527, 19082, 33151, 461, 17, 45575, 17887, 632,

5219, 14216, 68870, 5967, 1841, 4346, 87843, 17, 1594,

14512, 27, 71, 8184, 19, 290, 63748, 77658, 915,

210]]),

'attention_mask':tensor([[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1]])}

2.3 Wrap the base Transformer model by calling get_peft_model

model = AutoModelForCausalLM.from_pretrained(model_name_or_path)

The `print_trainable_parameters` method reports the number of trainable LoRA parameters (only 786,432) and their share of all parameters (only 0.1404%).

model = get_peft_model(model, peft_config)

model.print_trainable_parameters()

trainable params: 786,432 || all params: 560,001,024 || trainable%: 0.14043402892063284
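The reported count can be reproduced by hand. The sketch below assumes that LoRA is attached only to the query_key_value projection of each block (the bloom default in the mapping above), with in_features=1024 and out_features=3072, and that the model has 24 Transformer blocks; the block count is an assumption about bloomz-560m and is not stated in the text.

```python
hidden_size = 1024   # in_features of query_key_value
qkv_out = 3072       # out_features of query_key_value
r = 8                # LoRA rank from the configuration
num_blocks = 24      # assumption: number of Transformer blocks in bloomz-560m

# lora_A maps hidden_size -> r, lora_B maps r -> qkv_out, both without bias.
params_per_block = hidden_size * r + r * qkv_out
trainable = num_blocks * params_per_block

all_params = 560_001_024  # total reported by print_trainable_parameters
percentage = 100 * trainable / all_params

print(f"per block: {params_per_block:,}")
print(f"trainable: {trainable:,}")
print(f"share:     {percentage:.4f}%")
```

Under these assumptions each block adds 32,768 parameters, giving 786,432 in total, which matches the figure printed by PEFT.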

The structure of the LoRA model class is shown below:

model

PeftModelForCausalLM(

(base_model):LoraModel(

(model):BloomForCausalLM(

(transformer):BloomModel(

(word_embeddings):Embedding(250880, 1024)

(word_embeddings_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(h):ModuleList(

(0):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(1):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(2):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(3):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(4):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(5):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(6):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(7):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(8):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(9):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(10):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(11):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(12):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(13):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(14):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(15):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(16):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(17):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(18):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(19):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(20):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(21):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(22):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

(23):BloomBlock(

(input_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(self_attention):BloomAttention(

(query_key_value):Linear(

in_features=1024, out_features=3072, bias=True

(lora_dropout):ModuleDict(

(default):Dropout(p=0.1, inplace=False)

)

(lora_A):ModuleDict(

(default):Linear(in_features=1024, out_features=8, bias=False)

)

(lora_B):ModuleDict(

(default):Linear(in_features=8, out_features=3072, bias=False)

)

(lora_embedding_A):ParameterDict()

(lora_embedding_B):ParameterDict()

)

(dense):Linear(in_features=1024, out_features=1024, bias=True)

(attention_dropout):Dropout(p=0.0, inplace=False)

)

(post_attention_layernorm):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

(mlp):BloomMLP(

(dense_h_to_4h):Linear(in_features=1024, out_features=4096, bias=True)

(gelu_impl):BloomGelu()

(dense_4h_to_h):Linear(in_features=4096, out_features=1024, bias=True)

)

)

)

(ln_f):LayerNorm((1024,), eps=1e-05, elementwise_affine=True)

)

(lm_head):Linear(in_features=1024, out_features=250880, bias=False)

)

)

)

model.peft_config

{'default':LoraConfig(peft_type=<PeftType.LORA:'LORA'>, auto_mapping=None, base_model_name_or_path='/data/nfs/llm/model/bloomz-560m', revision=None, task_type=<TaskType.CAUSAL_LM:'CAUSAL_LM'>, inference_mode=False, r=8, target_modules=['query_key_value'], lora_alpha=32, lora_dropout=0.1, fan_in_fan_out=False, bias='none', modules_to_save=None, init_lora_weights=True, layers_to_transform=None, layers_pattern=None)}

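2.4 Model training¶

The rest of the training code needs no changes. Once training finishes, save only the parameter-efficient fine-tuning (adapter) weights so they can be loaded later for inference.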

# model

# optimizer and lr scheduler

optimizer = torch.optim.AdamW(model.parameters(), lr=lr)

lr_scheduler = get_linear_schedule_with_warmup(

    optimizer=optimizer,

    num_warmup_steps=0,

    num_training_steps=(len(train_dataloader) * num_epochs),

)

# training and evaluation

model = model.to(device)

for epoch in range(num_epochs):

    model.train()

    total_loss = 0

    for step, batch in enumerate(tqdm(train_dataloader)):

        batch = {k: v.to(device) for k, v in batch.items()}

        # print(batch)

        # print(batch["input_ids"].shape)

        outputs = model(**batch)

        loss = outputs.loss

        total_loss += loss.detach().float()

        loss.backward()

        optimizer.step()

        lr_scheduler.step()

        optimizer.zero_grad()

    model.eval()

    eval_loss = 0

    eval_preds = []

    for step, batch in enumerate(tqdm(eval_dataloader)):

        batch = {k: v.to(device) for k, v in batch.items()}

        with torch.no_grad():

            outputs = model(**batch)

        loss = outputs.loss

        eval_loss += loss.detach().float()

        eval_preds.extend(

            tokenizer.batch_decode(torch.argmax(outputs.logits, -1).detach().cpu().numpy(), skip_special_tokens=True)

        )

    eval_epoch_loss = eval_loss / len(eval_dataloader)

    eval_ppl = torch.exp(eval_epoch_loss)

    train_epoch_loss = total_loss / len(train_dataloader)

    train_ppl = torch.exp(train_epoch_loss)

    print(f"{epoch=}:{train_ppl=} {train_epoch_loss=} {eval_ppl=} {eval_epoch_loss=}")

100%|██████████| 7/7 [00:01<00:00, 4.49it/s] 100%|██████████| 7/7 [00:00<00:00, 22.94it/s]

epoch=0:train_ppl=tensor(7.0119e+25, device='cuda:0') train_epoch_loss=tensor(59.5122, device='cuda:0') eval_ppl=tensor(2.6500e+13, device='cuda:0') eval_epoch_loss=tensor(30.9082, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.16it/s] 100%|██████████| 7/7 [00:00<00:00, 22.95it/s]

epoch=1:train_ppl=tensor(4.4497e+11, device='cuda:0') train_epoch_loss=tensor(26.8213, device='cuda:0') eval_ppl=tensor(59509564., device='cuda:0') eval_epoch_loss=tensor(17.9016, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 10.94it/s] 100%|██████████| 7/7 [00:00<00:00, 23.06it/s]

epoch=2:train_ppl=tensor(6.5751e+09, device='cuda:0') train_epoch_loss=tensor(22.6066, device='cuda:0') eval_ppl=tensor(4530818.5000, device='cuda:0') eval_epoch_loss=tensor(15.3264, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.04it/s] 100%|██████████| 7/7 [00:00<00:00, 22.90it/s]

epoch=3:train_ppl=tensor(6.5462e+08, device='cuda:0') train_epoch_loss=tensor(20.2996, device='cuda:0') eval_ppl=tensor(9.2159e+08, device='cuda:0') eval_epoch_loss=tensor(20.6416, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.15it/s] 100%|██████████| 7/7 [00:00<00:00, 23.07it/s]

epoch=4:train_ppl=tensor(61906716., device='cuda:0') train_epoch_loss=tensor(17.9411, device='cuda:0') eval_ppl=tensor(1936483.3750, device='cuda:0') eval_epoch_loss=tensor(14.4764, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.15it/s] 100%|██████████| 7/7 [00:00<00:00, 23.02it/s]

epoch=5:train_ppl=tensor(155084.5781, device='cuda:0') train_epoch_loss=tensor(11.9517, device='cuda:0') eval_ppl=tensor(7552.6094, device='cuda:0') eval_epoch_loss=tensor(8.9296, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.14it/s] 100%|██████████| 7/7 [00:00<00:00, 23.05it/s]

epoch=6:train_ppl=tensor(5289.7910, device='cuda:0') train_epoch_loss=tensor(8.5735, device='cuda:0') eval_ppl=tensor(2450.5276, device='cuda:0') eval_epoch_loss=tensor(7.8041, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.08it/s] 100%|██████████| 7/7 [00:00<00:00, 22.89it/s]

epoch=7:train_ppl=tensor(2616.7517, device='cuda:0') train_epoch_loss=tensor(7.8697, device='cuda:0') eval_ppl=tensor(2140.2886, device='cuda:0') eval_epoch_loss=tensor(7.6687, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.16it/s] 100%|██████████| 7/7 [00:00<00:00, 22.97it/s]

epoch=8:train_ppl=tensor(1483.9406, device='cuda:0') train_epoch_loss=tensor(7.3025, device='cuda:0') eval_ppl=tensor(1361.1847, device='cuda:0') eval_epoch_loss=tensor(7.2161, device='cuda:0')

100%|██████████| 7/7 [00:00<00:00, 11.17it/s] 100%|██████████| 7/7 [00:00<00:00, 22.96it/s]

epoch=9:train_ppl=tensor(1103.2817, device='cuda:0') train_epoch_loss=tensor(7.0060, device='cuda:0') eval_ppl=tensor(1395.4637, device='cuda:0') eval_epoch_loss=tensor(7.2410, device='cuda:0')
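The perplexity values in this log are the exponential of the mean cross-entropy loss, which is what `torch.exp(...)` computes at the end of each epoch. As a quick sanity check, the sketch below recomputes a few of them with the standard library from the losses printed above. Small differences in the last digits are expected, because the printed losses are rounded to four decimals.

```python
import math

# (epoch, split) -> (printed mean loss, printed perplexity), copied from the log above
logged = {
    (8, "train"): (7.3025, 1483.9406),
    (9, "train"): (7.0060, 1103.2817),
    (9, "eval"): (7.2410, 1395.4637),
}


def perplexity(mean_loss: float) -> float:
    """Perplexity is exp of the mean cross-entropy loss."""
    return math.exp(mean_loss)


for (epoch, split), (loss, printed_ppl) in logged.items():
    ppl = perplexity(loss)
    rel_diff = abs(ppl - printed_ppl) / printed_ppl
    print(f"epoch={epoch} {split}: exp({loss}) = {ppl:.2f} (log: {printed_ppl}, rel. diff {rel_diff:.1e})")
```

The same check also makes it easy to see that the evaluation loss rises slightly between epochs 8 and 9 (7.2161 to 7.2410) while the training loss keeps falling.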

# Model evaluation

model.eval()

i = 16

inputs = tokenizer(f'{text_column} :{dataset["test"][i]["Tweet text"]} Label :', return_tensors="pt")

print(dataset["test"][i]["Tweet text"])

print(inputs)

with torch.no_grad():

    inputs = {k: v.to(device) for k, v in inputs.items()}

    outputs = model.generate(

        input_ids=inputs["input_ids"], attention_mask=inputs["attention_mask"], max_new_tokens=10, eos_token_id=3

    )

print(outputs)

print(tokenizer.batch_decode(outputs.detach().cpu().numpy(), skip_special_tokens=True))

Hey @nytimes your link to cancel my subscription isn't working and nobody is answering the chat. Please don't play that kind of stupid game.

{'input_ids':tensor([[227985, 5484, 915, 54078, 2566, 7782, 24502, 2632, 8989,

427, 36992, 2670, 140711, 21994, 10789, 530, 88399, 632,

183542, 368, 44799, 17, 29901, 5926, 7229, 861, 11596,

461, 78851, 14775, 17, 77658, 915, 210]]), 'attention_mask':tensor([[1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,

1, 1, 1, 1, 1, 1, 1, 1, 1, 1]])}

tensor([[227985, 5484, 915, 54078, 2566, 7782, 24502, 2632, 8989,

427, 36992, 2670, 140711, 21994, 10789, 530, 88399, 632,

183542, 368, 44799, 17, 29901, 5926, 7229, 861, 11596,

461, 78851, 14775, 17, 77658, 915, 210, 1936, 1936,

1936, 1936, 1936, 1936, 1936, 1936, 1936, 1936]],

device='cuda:0')

["Tweet text :Hey @nytimes your link to cancel my subscription isn't working and nobody is answering the chat. Please don't play that kind of stupid game. Label :nononononononononono"]

# saving model

peft_model_id = f"{model_name_or_path}_{peft_config.peft_type}_{peft_config.task_type}"

print("model_output:", peft_model_id)

model.save_pretrained(peft_model_id)

model_output:/data/nfs/llm/model/bloomz-560m_LORA_CAUSAL_LM

The saved model weight files are shown below:

/data/nfs/llm/model/bloomz-560m_LORA_CAUSAL_LM

├── [ 447] adapter_config.json

├── [3.0M] adapter_model.bin

└── [ 147] README.md

0 directories, 3 files

Note: only the trained incremental PEFT weights are saved here. adapter_config.json is the LoRA configuration file, and adapter_model.bin is the LoRA weight file.
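The roughly 3 MB size of adapter_model.bin can be cross-checked against the shapes in the model printout: each of the 24 BloomBlock layers (indices 0 to 23) carries one lora_A of shape 1024 x 8 and one lora_B of shape 8 x 3072 on query_key_value. The sketch below does that arithmetic. It assumes the weights are stored as 32-bit floats and ignores the small serialization overhead of the file.

```python
# Shapes taken from the printed model: query_key_value is Linear(1024 -> 3072), r = 8.
num_layers = 24        # BloomBlock indices 0..23
in_features = 1024
out_features = 3072
r = 8

# lora_A is (in_features x r), lora_B is (r x out_features); neither has a bias.
params_per_layer = in_features * r + r * out_features
total_params = num_layers * params_per_layer

bytes_per_param = 4    # assumption: float32 storage
size_mib = total_params * bytes_per_param / 2**20

print(f"LoRA parameters per layer: {params_per_layer:,}")
print(f"LoRA parameters in total:  {total_params:,}")
print(f"Estimated adapter size:    {size_mib:.1f} MiB")
```

Under these assumptions the estimate agrees with the `[3.0M]` shown in the directory listing.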

ckpt = f"{peft_model_id}/adapter_model.bin"

!du -h $ckpt

print("--------------")

!tree -h $peft_model_id

huggingface/tokenizers: The current process just got forked, after parallelism has already been used. Disabling parallelism to avoid deadlocks...

To disable this warning, you can either:

- Avoid using `tokenizers` before the fork if possible

- Explicitly set the environment variable TOKENIZERS_PARALLELISM=(true | false)

3.1M /data/nfs/llm/model/bloomz-560m_LORA_CAUSAL_LM/adapter_model.bin

--------------

/data/nfs/llm/model/bloomz-560m_LORA_CAUSAL_LM

├── [ 447] adapter_config.json

├── [3.0M] adapter_model.bin

└── [ 147] README.md

0 directories, 3 files

2.5 Loading the fine-tuned weights for inference¶

from peft import PeftModel, PeftConfig

peft_model_id = f"{model_name_or_path}_{peft_config.peft_type}_{peft_config.task_type}"

print("model_input:", peft_model_id)

config = PeftConfig.from_pretrained(peft_model_id)

model = AutoModelForCausalLM.from_pretrained(config.base_model_name_or_path)

model = PeftModel.from_pretrained(model, peft_model_id)

model_input:/data/nfs/llm/model/bloomz-560m_LORA_CAUSAL_LM
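Because the LoRA update is a plain matrix product, the adapter can also be folded into the base weight once after loading, so that inference uses a single matrix W + s·BA instead of two paths. The pure-Python sketch below checks this equivalence on tiny hypothetical matrices. The scaling factor s = lora_alpha / r follows the configuration used here (32 / 8 = 4), while the toy dimensions, the toy rank of 1, and all values are made up for illustration. In PEFT, LoRA models provide `merge_and_unload()` for this purpose; it is mentioned only as a pointer and is not something this notebook runs.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a, b):
    """Element-wise sum of two equally shaped matrices."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def scale(a, s):
    """Multiply every entry of a matrix by the scalar s."""
    return [[s * x for x in row] for row in a]


# Scaling as configured in this notebook: lora_alpha / r = 32 / 8 = 4.0
lora_alpha, lora_r = 32, 8
s = lora_alpha / lora_r

# Hypothetical toy matrices (d_in = 3, d_out = 2, toy rank 1); the notebook uses 1024, 3072 and 8.
W0 = [[1, 2, 3], [4, 5, 6]]   # frozen base weight, shape (d_out, d_in)
A = [[1, 0, -1]]              # down-projection, shape (rank, d_in)
B = [[2], [-1]]               # up-projection, shape (d_out, rank)
x = [[1], [2], [3]]           # one input column vector

# Two-path forward used during training: h = W0 x + s * B (A x)
h_two_paths = add(matmul(W0, x), scale(matmul(B, matmul(A, x)), s))

# Merged forward: fold the update into the weight once, then do a single matmul
W_merged = add(W0, scale(matmul(B, A), s))
h_merged = matmul(W_merged, x)

print(h_two_paths, h_merged, h_two_paths == h_merged)
```

The small integer values keep the floating-point arithmetic exact, so the two results can be compared with `==`; with real weights a tolerance-based comparison would be the safer choice.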

model.to(device)

model.eval()

i = 4

inputs = tokenizer(f'{text_column} :{dataset["test"][i]["Tweet text"]} Label :', return_tensors="pt")

print(dataset["test"][i]["Tweet text"])

print(inputs)

with torch.no_grad():

    inputs = {k: v.to(device) for k, v in inputs.items()}

    outputs = model.generate(

        input_ids=inputs["input_ids"], attention_mask=inputs["attention_mask"], max_new_tokens=10, eos_token_id=3

    )

print(outputs)

print(tokenizer.batch_decode(outputs.detach().cpu().numpy(), skip_special_tokens=True))

@greateranglia Ok thanks...

{'input_ids':tensor([[227985, 5484, 915, 2566, 14173, 2960, 29906, 387, 20706,

49337, 1369, 77658, 915, 210]]), 'attention_mask':tensor([[1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1]])}

tensor([[227985, 5484, 915, 2566, 14173, 2960, 29906, 387, 20706,

49337, 1369, 77658, 915, 210, 1936, 1936, 1936, 1936,

1936, 1936, 1936, 1936, 1936, 1936]], device='cuda:0')

['Tweet text :@greateranglia Ok thanks... Label :nononononononononono']
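`generate` returns the prompt tokens followed by the newly generated ones, which is why the decoded string repeats the whole prompt. One common way to look only at the continuation is to slice off the prompt length before decoding. The sketch below shows the idea on plain Python lists, with the token ids copied from the printed tensors above; with the real tensors the equivalent slice would be `outputs[:, inputs["input_ids"].shape[1]:]`.

```python
# Token ids copied from the printed tensors above.
prompt_ids = [227985, 5484, 915, 2566, 14173, 2960, 29906, 387, 20706,
              49337, 1369, 77658, 915, 210]
# The printed generate() output: the prompt followed by ten new tokens, all with id 1936.
output_ids = prompt_ids + [1936] * 10

# Keep only what was generated after the prompt.
new_ids = output_ids[len(prompt_ids):]

print(len(prompt_ids), len(output_ids), len(new_ids))
print(set(new_ids))
```

Looking at the continuation alone makes the behaviour easier to read: every new token has id 1936 (decoded as "no"), and generation ran to `max_new_tokens=10` without producing `eos_token_id=3`.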